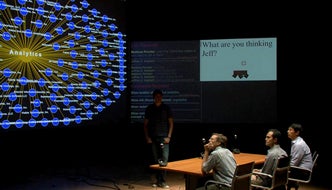

A group of four people walk into a room and the leader says, “Watson, bring me the last working session.” The computer recognizes and greets the group, then retrieves the materials used in the last meeting and displays them on three large screens. Settling down to work, the leader approaches one screen, and swipes his hands apart to zoom into the information on display. The participants interact with the room through computers that can understand their speech, and sensors that detect their position, record their roles and observe their attention. When the topic of discussion shifts from one screen to another, but one participant remains focused on the previous point, the computer asks a question: “What are you thinking?”

It’s a simple scene, but it illustrates a milestone. The Cognitive and Immersive Systems Laboratory (CISL), a joint effort of Rensselaer and IBM Research, has reached that milestone and is poised to advance cognitive and immersive environments for collaborative problem-solving in settings such as board rooms, classrooms, diagnosis rooms, and design studios.

“This new prototype is a launching point, a functioning space where humans can begin to interact naturally with computers,” said Hui Su, director of CISL. “At its core is a multi-agent architecture for a cognitive environment created by IBM Watson Research Center to link human experience with technology. In CISL, we created this architecture to integrate technologies that register different kinds of human behavior captured by sensors as individual events and forward them to the cognitive agents behind the scenes for interpretation. Enhancing this architecture will allow us to link new sensing technologies and computer vision technologies into the system, and to enable collaborative decision-making tools on top of these technologies.”
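The pattern Su describes, in which sensor readings are registered as individual events and forwarded to agents for interpretation, can be illustrated with a minimal sketch. This is not the CISL or IBM implementation: the names `SensorEvent`, `EventRouter`, `speech_agent`, and `gesture_agent`, and the event kinds and payload fields, are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable, DefaultDict, List


@dataclass
class SensorEvent:
    """One observed behavior, registered as an individual event (hypothetical shape)."""
    kind: str                      # e.g. "speech", "gesture", "position"
    source: str                    # illustrative sensor name, e.g. "microphone"
    payload: dict = field(default_factory=dict)


# An agent is any callable that interprets an event and returns a response.
Agent = Callable[[SensorEvent], str]


class EventRouter:
    """Forwards each event to every agent subscribed to that event kind."""

    def __init__(self) -> None:
        self._agents: DefaultDict[str, List[Agent]] = defaultdict(list)

    def subscribe(self, kind: str, agent: Agent) -> None:
        self._agents[kind].append(agent)

    def publish(self, event: SensorEvent) -> List[str]:
        # Events with no subscribed agent produce no responses.
        return [agent(event) for agent in self._agents.get(event.kind, [])]


def speech_agent(event: SensorEvent) -> str:
    # Assumed behavior: treat recognized speech as a retrieval request.
    return f"retrieve: {event.payload['text']}"


def gesture_agent(event: SensorEvent) -> str:
    # Assumed behavior: map a gesture to a zoom action on one screen.
    return f"zoom screen {event.payload['screen']}"


router = EventRouter()
router.subscribe("speech", speech_agent)
router.subscribe("gesture", gesture_agent)

responses = router.publish(
    SensorEvent("speech", "microphone", {"text": "last working session"})
)
print(responses)
```

Because sensors and agents only share the event format in this sketch, a new sensing technology could be added by publishing a new event kind, which is one plausible reading of how such an architecture stays extensible.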

The current capabilities of the space are rudimentary in comparison with human understanding. The room can understand and register speech, three specific gestures, and the position, roles, and spatial orientation of its occupants. These inputs trigger the appropriate cognitive computing agents to take action and bring data and information relevant to the discussion into the room in real time. But the promise is clear.

“From this point, we can build the capability for better interpreting what happens in the room,” said Su. “Our architecture provides a framework for incorporating new technologies such as more cognitive computing capabilities that interpret human behavior. That allows us to really dig into what people mean during a discussion, triggering the cognitive computing agents to bring valuable analysis and insights to the discussion. In terms of interpreting behavior, we are at the very beginning, but from here the terrain gets very interesting.”

CISL is developing its prototype “situations room” using Studio 2 in the Curtis R. Priem Experimental and Performing Arts Center (EMPAC). Studio 2 was designed as an “exceptionally versatile space for the integration of digital technology with human expression and perception,” and easily incorporates the technology CISL is creating. The prototype relies on several cognitive technologies developed by Rensselaer and IBM, as well as sensors (such as microphones, cameras, and Kinect motion sensors) linked by the CISL architecture.

Within Studio 2, sensors detect human activity, such as a change in the position of an occupant of the room, speech, gesture, and head movement. Absent the CISL architecture, each of the cognitive technologies acts in isolation, responding to a specific activity detected by a single type of sensor and provided to the computer for interpretation. A sensor provides an input, and the computer provides an output. The interaction between human and machine is based on a single action with a finite duration.

The prototype drew upon numerous experts from Rensselaer and IBM Research, including researchers from the lab of Rensselaer professor of electrical, computer, and systems engineering Qiang Ji; researchers from the lab of Rensselaer professor of cognitive science Selmer Bringsjord; researchers from the lab of Rensselaer professor of electrical, computer, and systems engineering Rich Radke; Gordon Clement from CISL; and IBM researchers Jeff Kephart, Yunfeng Zhang, and Yedendra Shrinivasan.